Which of the following algorithms is asymptotically complete?

Random sampling of states

Local beam search

Random walk

Hill climbing with random restarts

1 point

Genetic algorithms are said to jump from one hill to another. Which of the following is responsible for such behavior?

Mutation

Crossover

Fitness function

Natural Selection

1 point

Mona was running a hill climbing procedure to solve a problem. She observed that her procedure often gets stuck on plateaus. Which of the following additions to the procedure would you recommend to her?

Allow indefinite sideways moves

Allow sideways moves, but only up to a certain limit (say, 100)

Keep a tabu list of recently visited nodes

Perform a BFS to find the next node with a better objective function value when the search is stuck on a plateau

1 point

Which of the following is/are true about local search algorithms?

They are guaranteed to always terminate with a correct solution

They are highly memory efficient compared to tree/graph search methods

They can only solve a certain variety of optimization functions

They don’t make any assumptions about the structure of the optimization function

Fischl wishes to solve the 8-queens problem using hill climbing with random restarts and with no sideways moves allowed. It is known that the probability of a successful run of the hill climbing algorithm on this problem is 0.14. On average, how many restarts should she expect? (Round your answer to the nearest integer.)
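The reasoning behind this kind of question can be sketched in code. If each run succeeds independently with probability p, the number of runs until the first success follows a geometric distribution with mean 1/p. The snippet below is a minimal sketch under that independence assumption; the function names `expected_runs` and `simulate_runs` are illustrative and not part of the assignment. Whether "restarts" counts the first run is a matter of convention, so both figures are printed.

```python
import random


def expected_runs(p_success):
    """Mean number of independent runs until the first success (geometric distribution)."""
    return 1.0 / p_success


def simulate_runs(p_success, trials=20_000, seed=0):
    """Estimate the same mean by simulation, assuming runs are independent."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        runs = 1
        # Keep restarting until a run succeeds.
        while rng.random() >= p_success:
            runs += 1
        total += runs
    return total / trials


p = 0.14  # success probability stated in the question
print("expected runs (including the first):", expected_runs(p))
print("expected restarts (excluding the first run):", expected_runs(p) - 1)
print("simulated mean runs:", simulate_runs(p))
```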

1 point

1 point

The famous FF planner uses which of the following algorithms?

Heuristic Search

Enforced hill climbing

Iterative Deepening Search

Greedy hill climbing with random restarts

1 point

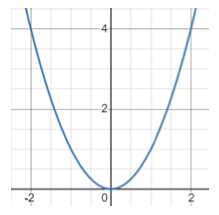

Gradient descent is guaranteed to converge for strictly convex functions, assuming the step size λ is sufficiently small. Assume that we have the function y = x^2. For which values of λ will gradient descent converge?

0.1

0.5

1.0

2.5
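The effect of the step size can be explored numerically. For y = x^2 the gradient is 2x, so each update is x ← x − λ·2x = (1 − 2λ)·x, and the iterates shrink only when |1 − 2λ| < 1. The snippet below is a small sketch of that update; the starting point `x0 = 1.0` and the iteration count are arbitrary choices for illustration, not values from the assignment.

```python
def gradient_descent(step_size, x0=1.0, iterations=50):
    """Run plain gradient descent on y = x^2 and return the final x."""
    x = x0
    for _ in range(iterations):
        gradient = 2.0 * x          # dy/dx for y = x^2
        x = x - step_size * gradient
    return x


# Candidate step sizes from the question.
for lam in (0.1, 0.5, 1.0, 2.5):
    final_x = gradient_descent(lam)
    print(f"lambda={lam}: |x| after 50 steps = {abs(final_x):.3e}")
```

A final |x| close to zero indicates convergence to the minimum at x = 0; a value that stays fixed or grows indicates oscillation or divergence.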

For Questions 8 and 9

In Simulated Annealing, the temperature is generally reduced from a positive value to a low value over successive iterations. Assume the following 3-step temperature schedule:

[20, 10, 0]

Assume that we have three states s1, s2, and s3 such that V(s1) = 5, V(s2) = 0, and V(s3) = 10. Successors of a state are chosen uniformly randomly. The successor states are defined as: next(s1) = {s2}, next(s2) = {s1, s3}, next(s3) = {s2}. Assume that s3 is the start state.

Answer the next 2 questions based on this setting.
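This setting can be modelled by tracking the probability of being in each state after every iteration. The sketch below makes several assumptions that the question does not spell out: the goal is to maximize V, an improving move is always accepted, a worsening move is accepted with probability exp(ΔV / T), a temperature of 0 means worsening moves are never accepted, and every entry in the schedule counts as one iteration. The helper names `accept_prob`, `step`, and `run` are illustrative only.

```python
import math

# Values and successor sets from the problem statement.
V = {"s1": 5.0, "s2": 0.0, "s3": 10.0}
NEXT = {"s1": ["s2"], "s2": ["s1", "s3"], "s3": ["s2"]}


def accept_prob(delta, temperature):
    """Acceptance rule (assumed): always accept improvements, else exp(delta / T)."""
    if delta > 0:
        return 1.0
    if temperature <= 0:
        return 0.0  # assumed: at T = 0 non-improving moves are rejected
    return math.exp(delta / temperature)


def step(dist, temperature):
    """Advance the state distribution by one simulated-annealing iteration."""
    new_dist = {s: 0.0 for s in V}
    for state, prob in dist.items():
        successors = NEXT[state]
        for succ in successors:
            pick = prob / len(successors)  # successor chosen uniformly at random
            a = accept_prob(V[succ] - V[state], temperature)
            new_dist[succ] += pick * a          # move accepted
            new_dist[state] += pick * (1 - a)   # move rejected, stay put
    return new_dist


def run(start, schedule):
    """Return the state distribution after each temperature in the schedule."""
    dist = {s: 0.0 for s in V}
    dist[start] = 1.0
    history = []
    for temperature in schedule:
        dist = step(dist, temperature)
        history.append(dist)
    return history


for i, dist in enumerate(run("s3", [20, 10, 0]), start=1):
    print(f"after iteration {i}:", {s: round(p, 3) for s, p in dist.items()})
```

A different acceptance rule or a different reading of the schedule would change the numbers, so the printed distribution should be checked against the conventions used in the course.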

After the first iteration of simulated annealing, what is the probability that the current state is s2? Round the answer to three digits after the decimal point.

1 point

What is the probability that when the algorithm ends, we are at the state with the highest value?

Round the answer to three digits after the decimal point.

1 point

1 point

What are the difference(s) between Simulated Annealing (SA) and Genetic Algorithms (GA)?

GA maintains multiple candidate solutions while SA does not.

SA is used for minimization problems while GA is used for maximization problems.

SA has no parameters to set, whereas GA requires you to set multiple parameters such as the crossover rate.

GA will always converge to an optimal solution faster than SA on any given problem.
